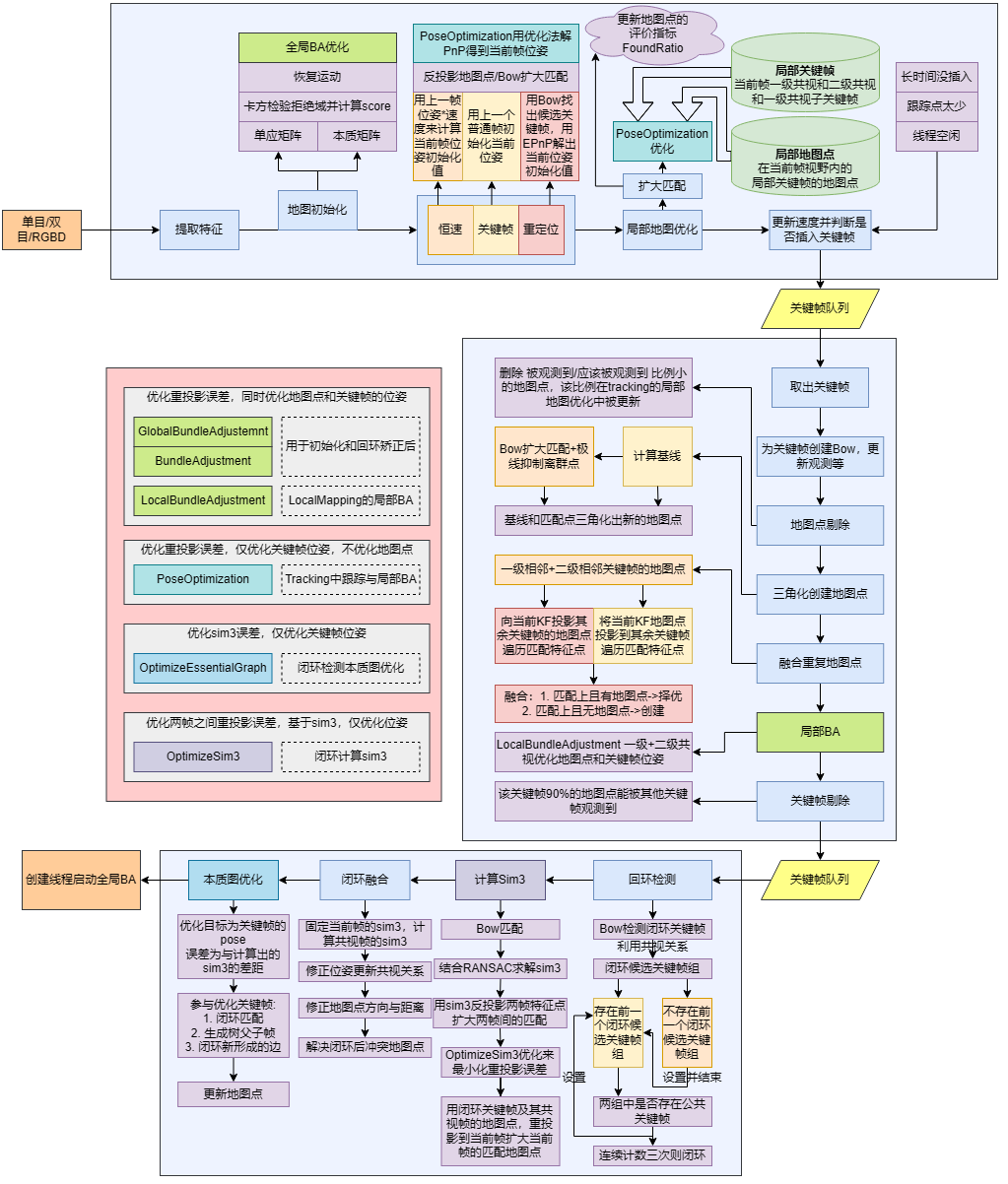

ORBSLAM2的跟踪器Tracking

跟踪器Tracking

Tracking的输入是普通帧,负责跟踪每一帧的位姿,并在恰当的时候插入关键帧。在SLAM系统的初始阶段,如果是单目相机会首先进行系统初始化,这时会确定单目SLAM的尺度;初始化完成后,会使用恒速模型与关键帧模型两种方法来初始化当前帧的位姿,并通过BA的方式解PnP来求解出当前帧的位姿;当然如果上述两种方法都跟踪失败了,系统会进行重定位,这时会通过Bow来选出重定位候选帧,使用EPnP来求解出当前帧的还算准确的位姿,并通过BA进一步解PnP优化出当前帧的位姿。值得一提的是,用于优化PnP的BA仅仅只会通过重投影误差优化当前帧的位姿,而不会优化地图点。

在求解出当前帧的位姿后,会使用帧的共视关系来创建局部地图,主要包括:

当前帧的一级共视关键帧

当前帧的一级共视关键帧的父子关键帧

当前帧的二级共视关键帧二级共视选共视关系最好的前10个)

这些帧的地图点就构成了局部地图点,局部地图点主要有两个作用:

更新地图点的评价指标,通过isInFrustum函数判断地图点是否在当前帧的视野内来增加对应地图点应该被观测到的次数,从而用于后续地图点的剔除

对当前帧扩大匹配,从而得到更多的匹配点用于后续的优化

扩大匹配完成后,就会仅优化当前帧的位姿,来更精确的完成求解。(这里提到的所有所有优化都是通过重投影误差来优化这一帧的位姿,参与优化的其他参数为地图点的3D坐标)

最后,还会判断判断是否需要插入关键帧。

初始化

这里的初始化仅仅是对Initializer类的小封装,当系统经过两帧后,会首先检测这两帧的特征点是否超过100个,如果超过就会尝试匹配,并通过Initializer类恢复位姿并设定初始尺度。三角化成功后就会将这两帧设置为关键帧同时更新地图,并进行全局BA,这会同时优化地图点和这两个关键帧的位姿以确保求解精准。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 203 204 205 206 207 208 209 210 211 212 213 214 void Tracking::MonocularInitialization () if (!mpInitializer) { if (mCurrentFrame.mvKeys.size () > 100 ) { mInitialFrame = Frame (mCurrentFrame); mLastFrame = Frame (mCurrentFrame); ... mpInitializer = new Initializer (mCurrentFrame, 1.0 , 200 ); ... return ; } } else { if ((int )mCurrentFrame.mvKeys.size () <= 100 ) { ... return ; } ORBmatcher matcher ( 0.9 , true ) int nmatches = matcher.SearchForInitialization ( mInitialFrame, mCurrentFrame, mvbPrevMatched, mvIniMatches, 100 ); if (nmatches < 100 ) { ... return ; } ... if (mpInitializer->Initialize ( mCurrentFrame, mvIniMatches, Rcw, tcw, mvIniP3D, vbTriangulated)) { for (size_t i = 0 , iend = mvIniMatches.size (); i < iend; i++) { if (mvIniMatches[i] >= 0 && !vbTriangulated[i]) { mvIniMatches[i] = -1 ; nmatches--; } } mInitialFrame.SetPose (cv::Mat::eye (4 , 4 , CV_32F)); ... CreateInitialMapMonocular (); } } } void Tracking::CreateInitialMapMonocular () KeyFrame *pKFini = new KeyFrame (mInitialFrame, mpMap, mpKeyFrameDB); KeyFrame *pKFcur = new KeyFrame (mCurrentFrame, mpMap, mpKeyFrameDB); pKFini->ComputeBoW (); pKFcur->ComputeBoW (); mpMap->AddKeyFrame (pKFini); mpMap->AddKeyFrame (pKFcur); for (size_t i = 0 ; i < mvIniMatches.size (); i++) { if (mvIniMatches[i] < 0 ) continue ; cv::Mat worldPos (mvIniP3D[i]) ; MapPoint *pMP = new MapPoint ( worldPos, pKFcur, mpMap); pKFini->AddMapPoint (pMP, i); pKFcur->AddMapPoint (pMP, mvIniMatches[i]); pMP->AddObservation (pKFini, i); pMP->AddObservation (pKFcur, mvIniMatches[i]); pMP->ComputeDistinctiveDescriptors (); pMP->UpdateNormalAndDepth (); mCurrentFrame.mvpMapPoints[mvIniMatches[i]] = pMP; mCurrentFrame.mvbOutlier[mvIniMatches[i]] = false ; mpMap->AddMapPoint (pMP); } pKFini->UpdateConnections (); pKFcur->UpdateConnections (); Optimizer::GlobalBundleAdjustemnt (mpMap, 20 ); float medianDepth = pKFini->ComputeSceneMedianDepth (2 ); float invMedianDepth = 1.0f / medianDepth; if (medianDepth < 0 || pKFcur->TrackedMapPoints (1 ) < 100 ) { cout << "Wrong initialization, reseting..." << endl; Reset (); return ; } cv::Mat Tc2w = pKFcur->GetPose (); Tc2w.col (3 ).rowRange (0 , 3 ) = Tc2w.col (3 ).rowRange (0 , 3 ) * invMedianDepth; pKFcur->SetPose (Tc2w); vector<MapPoint *> vpAllMapPoints = pKFini->GetMapPointMatches (); for (size_t iMP = 0 ; iMP < vpAllMapPoints.size (); iMP++) { if (vpAllMapPoints[iMP]) { MapPoint *pMP = vpAllMapPoints[iMP]; pMP->SetWorldPos (pMP->GetWorldPos () * invMedianDepth); } } mpLocalMapper->InsertKeyFrame (pKFini); mpLocalMapper->InsertKeyFrame (pKFcur); mCurrentFrame.SetPose (pKFcur->GetPose ()); mnLastKeyFrameId = mCurrentFrame.mnId; mpLastKeyFrame = pKFcur; mvpLocalKeyFrames.push_back (pKFcur); mvpLocalKeyFrames.push_back (pKFini); mvpLocalMapPoints = mpMap->GetAllMapPoints (); mpReferenceKF = pKFcur; mCurrentFrame.mpReferenceKF = pKFcur; mLastFrame = Frame (mCurrentFrame); mpMap->SetReferenceMapPoints (mvpLocalMapPoints); mpMapDrawer->SetCurrentCameraPose (pKFcur->GetPose ()); mpMap->mvpKeyFrameOrigins.push_back (pKFini); mState = OK; }

普通帧跟踪

每当一个普通帧到达时,会有限选择恒速模型进行跟踪;如果速度为空或刚刚完成重定位或恒速模型跟踪失败,那么才会使用关键帧模型进行跟踪;如果都跟踪失败,就只能进行重定位了。

恒速模型指的是:假设已知了第i-1帧和第i帧的位姿分别为i+1帧到达时,粗略估计第i+1帧的位姿为i帧和第i+1帧的相对位姿与第i-1帧和第i帧的相对位姿是一样的)

关键帧模型指的是:由于每个普通帧的参考关键帧都是最新的关键帧,因此可以用Bow匹配当前帧和参考关键帧的特征点,并通过匹配点数目,来判断当前帧是否真的能和参考关键帧对应的上 ,如果匹配点数目比较多,就认为当前普通帧与上一个普通帧应该大差不差,于是直接拿上一个普通帧的位姿来粗略估计当前帧的位姿

重定位指的是:由于恒速模型和关键帧模型都失败了,说明当前普通帧与最近一段的普通帧离的比较远了,只能尝试看看当前普通帧是否还在已经获取到的地图里,如果在的话还可以勉强拉回来(不在当前地图的话就没办法了)。这会使用Bow来匹配当前普通帧与所有关键帧,并选出重定位候选关键帧,同时使用EPnP算法来当前帧估计一个还算可靠的位姿。

值得一提的是,上面三种模型得到的位姿都只是"粗略估计",只是为接下来的优化提供一个可靠的初始值。在完成上述三种方法的"粗定位"后,会利用这个"粗定位"来进行扩大匹配,并使用扩大匹配后的匹配点构建一个PnP的优化,通过仅优化当前普通帧位姿的方式来得到一个"细定位"的结果。

在得到"细定位"的结果后会立马更新局部地图,并进行局部地图优化,关于局部地图前文已经提到一点了,后文会更加详细阐述。

恒速模型

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 bool Tracking::TrackWithMotionModel () ... mCurrentFrame.SetPose (mVelocity * mLastFrame.mTcw); ... int th; if (mSensor != System::STEREO) th = 15 ; else th = 7 ; int nmatches = matcher.SearchByProjection (mCurrentFrame, mLastFrame, th, mSensor == System::MONOCULAR); if (nmatches < 20 ) { fill (mCurrentFrame.mvpMapPoints.begin (), mCurrentFrame.mvpMapPoints.end (), static_cast <MapPoint *>(NULL )); nmatches = matcher.SearchByProjection (mCurrentFrame, mLastFrame, 2 * th, mSensor == System::MONOCULAR); } if (nmatches < 20 ) return false ; Optimizer::PoseOptimization (&mCurrentFrame); int nmatchesMap = 0 ; for (int i = 0 ; i < mCurrentFrame.N; i++) { if (mCurrentFrame.mvpMapPoints[i]) { if (mCurrentFrame.mvbOutlier[i]) { MapPoint *pMP = mCurrentFrame.mvpMapPoints[i]; mCurrentFrame.mvpMapPoints[i] = static_cast <MapPoint *>(NULL ); mCurrentFrame.mvbOutlier[i] = false ; pMP->mbTrackInView = false ; pMP->mnLastFrameSeen = mCurrentFrame.mnId; nmatches--; } else if (mCurrentFrame.mvpMapPoints[i]->Observations () > 0 ) nmatchesMap++; } } return nmatchesMap >= 10 ; }

关键帧模型

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 bool Tracking::TrackReferenceKeyFrame () mCurrentFrame.ComputeBoW (); ORBmatcher matcher (0.7 , true ) ; vector<MapPoint *> vpMapPointMatches; int nmatches = matcher.SearchByBoW ( mpReferenceKF, mCurrentFrame, vpMapPointMatches); if (nmatches < 15 ) return false ; mCurrentFrame.mvpMapPoints = vpMapPointMatches; mCurrentFrame.SetPose (mLastFrame.mTcw); Optimizer::PoseOptimization (&mCurrentFrame); int nmatchesMap = 0 ; for (int i = 0 ; i < mCurrentFrame.N; i++) { if (mCurrentFrame.mvpMapPoints[i]) { if (mCurrentFrame.mvbOutlier[i]) { MapPoint *pMP = mCurrentFrame.mvpMapPoints[i]; mCurrentFrame.mvpMapPoints[i] = static_cast <MapPoint *>(NULL ); mCurrentFrame.mvbOutlier[i] = false ; pMP->mbTrackInView = false ; pMP->mnLastFrameSeen = mCurrentFrame.mnId; nmatches--; } else if (mCurrentFrame.mvpMapPoints[i]->Observations () > 0 ) nmatchesMap++; } } return nmatchesMap >= 10 ; }

重定位

关于重定位候选帧是怎么获取的,前文已经阐述了,它会计算组得分不要忘了!

在重定位的过程中,会多次尝试扩大匹配,尽可能的挽救系统,如果实在匹配不上那也只能寄了。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 bool Tracking::Relocalization () mCurrentFrame.ComputeBoW (); vector<KeyFrame *> vpCandidateKFs = mpKeyFrameDB->DetectRelocalizationCandidates (&mCurrentFrame); ... int nCandidates = 0 ; for (int i = 0 ; i < nKFs; i++) { KeyFrame *pKF = vpCandidateKFs[i]; if (pKF->isBad ()) vbDiscarded[i] = true ; else { int nmatches = matcher.SearchByBoW (pKF, mCurrentFrame, vvpMapPointMatches[i]); if (nmatches < 15 ) { vbDiscarded[i] = true ; continue ; } else { PnPsolver *pSolver = new PnPsolver (mCurrentFrame, vvpMapPointMatches[i]); pSolver->SetRansacParameters ( 0.99 , 10 , 300 , 4 , 0.5 , 5.991 ); vpPnPsolvers[i] = pSolver; nCandidates++; } } } ... while (nCandidates > 0 && !bMatch) { for (int i = 0 ; i < nKFs; i++) { ... PnPsolver *pSolver = vpPnPsolvers[i]; cv::Mat Tcw = pSolver->iterate (5 , bNoMore, vbInliers, nInliers); ... if (!Tcw.empty ()) { Tcw.copyTo (mCurrentFrame.mTcw); ... for (int j = 0 ; j < np; j++) { if (vbInliers[j]) { mCurrentFrame.mvpMapPoints[j] = vvpMapPointMatches[i][j]; sFound.insert (vvpMapPointMatches[i][j]); } else mCurrentFrame.mvpMapPoints[j] = NULL ; } int nGood = Optimizer::PoseOptimization (&mCurrentFrame); if (nGood < 10 ) continue ; for (int io = 0 ; io < mCurrentFrame.N; io++) if (mCurrentFrame.mvbOutlier[io]) mCurrentFrame.mvpMapPoints[io] = static_cast <MapPoint *>(NULL ); if (nGood < 50 ) { int nadditional = matcher2.SearchByProjection ( mCurrentFrame, vpCandidateKFs[i], sFound, 10 , 100 ); if (nadditional + nGood >= 50 ) { nGood = Optimizer::PoseOptimization (&mCurrentFrame); if (nGood > 30 && nGood < 50 ) { sFound.clear (); for (int ip = 0 ; ip < mCurrentFrame.N; ip++) if (mCurrentFrame.mvpMapPoints[ip]) sFound.insert (mCurrentFrame.mvpMapPoints[ip]); nadditional = matcher2.SearchByProjection ( mCurrentFrame, vpCandidateKFs[i], sFound, 3 , 64 ); if (nGood + nadditional >= 50 ) { nGood = Optimizer::PoseOptimization (&mCurrentFrame); for (int io = 0 ; io < mCurrentFrame.N; io++) if (mCurrentFrame.mvbOutlier[io]) mCurrentFrame.mvpMapPoints[io] = NULL ; } } } } if (nGood >= 50 ) { bMatch = true ; break ; } } } } if (!bMatch) { return false ; } else { mnLastRelocFrameId = mCurrentFrame.mnId; return true ; } }

局部地图优化

前文提到,在求解出当前帧的"细定位"位姿后,会使用帧的共视关系来创建局部地图,并通过局部地图优化来求解"精确定位",其中局部地图主要包括:

当前帧的一级共视关键帧

当前帧的一级共视关键帧的父子关键帧

当前帧的二级共视关键帧二级共视选共视关系最好的前10个)

注意Tracking中的局部地图优化不要和LocalMapping中的局部BA搞混了:

Tracking中的局部地图优化过程参与优化的仅有当前普通帧和一些局部地图点,目的是通过扩大匹配的方式来进一步求解当前普通帧的"精确定位",仅仅只会优化当前普通帧的位姿,不会优化地图点的位姿

LocalMapping中的局部BA过程参与优化的是多个关键帧(一二级共视)和这些关键帧的地图点,目的是同时调整一大堆关键帧和地图点的位姿,会优化关键帧的位姿(不过二级共视关键帧不会优化位姿)和地图点的位姿

当获取到局部地图点后,会使用这些局部地图点来扩大匹配,同时调整每个地图点的指标(前文提到的通过isInFrustum函数判断地图点是否在当前帧的视野内来增加对应地图点应该被观测到的次数,从而用于后续地图点的剔除)。最后执行和恒速|关键帧|重定位的同款优化来解PnP求出当前普通帧的"精确定位"

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 bool Tracking::TrackLocalMap () UpdateLocalMap (); SearchLocalPoints (); Optimizer::PoseOptimization (&mCurrentFrame); mnMatchesInliers = 0 ; for (int i = 0 ; i < mCurrentFrame.N; i++) { if (mCurrentFrame.mvpMapPoints[i]) { if (!mCurrentFrame.mvbOutlier[i]) { mCurrentFrame.mvpMapPoints[i]->IncreaseFound (); if (!mbOnlyTracking) { if (mCurrentFrame.mvpMapPoints[i]->Observations () > 0 ) mnMatchesInliers++; } else mnMatchesInliers++; } ... } } if (mCurrentFrame.mnId < mnLastRelocFrameId + mMaxFrames && mnMatchesInliers < 50 ) return false ; if (mnMatchesInliers < 30 ) return false ; else return true ; }

关键帧插入条件

当跟踪完成后,会判断是否需要插入关键帧,满足以下条件就会插入:

当前帧的id与上次插入关键帧的id差距较大(很长时间没有插入关键帧)

满足插入关键帧的最小间隔并且localMapper处于空闲状态

和参考帧相比当前跟踪到的点太少;同时跟踪到的内点还不能太少

满足上述条件且LocalMapping不是很忙(因为插入是push到一个列表中,然后LocalMapping逐个pop并处理)

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 bool Tracking::NeedNewKeyFrame () if (mbOnlyTracking) return false ; if (mpLocalMapper->isStopped () || mpLocalMapper->stopRequested ()) return false ; ... if (mCurrentFrame.mnId < mnLastRelocFrameId + mMaxFrames && nKFs > mMaxFrames) return false ; ... bool bLocalMappingIdle = mpLocalMapper->AcceptKeyFrames (); ... float thRefRatio = 0.75f ; if (nKFs < 2 ) thRefRatio = 0.4f ; if (mSensor == System::MONOCULAR) thRefRatio = 0.9f ; const bool c1a = mCurrentFrame.mnId >= mnLastKeyFrameId + mMaxFrames; const bool c1b = (mCurrentFrame.mnId >= mnLastKeyFrameId + mMinFrames && bLocalMappingIdle); const bool c1c = mSensor != System::MONOCULAR && (mnMatchesInliers < nRefMatches * 0.25 || bNeedToInsertClose); const bool c2 = ((mnMatchesInliers < nRefMatches * thRefRatio || bNeedToInsertClose) && mnMatchesInliers > 15 ); if ((c1a || c1b || c1c) && c2) { if (bLocalMappingIdle) { return true ; } else { mpLocalMapper->InterruptBA (); if (mSensor != System::MONOCULAR) { if (mpLocalMapper->KeyframesInQueue () < 3 ) return true ; else return false ; } else return false ; } } else return false ; }